Learning and amortized inference in probabilistic programs

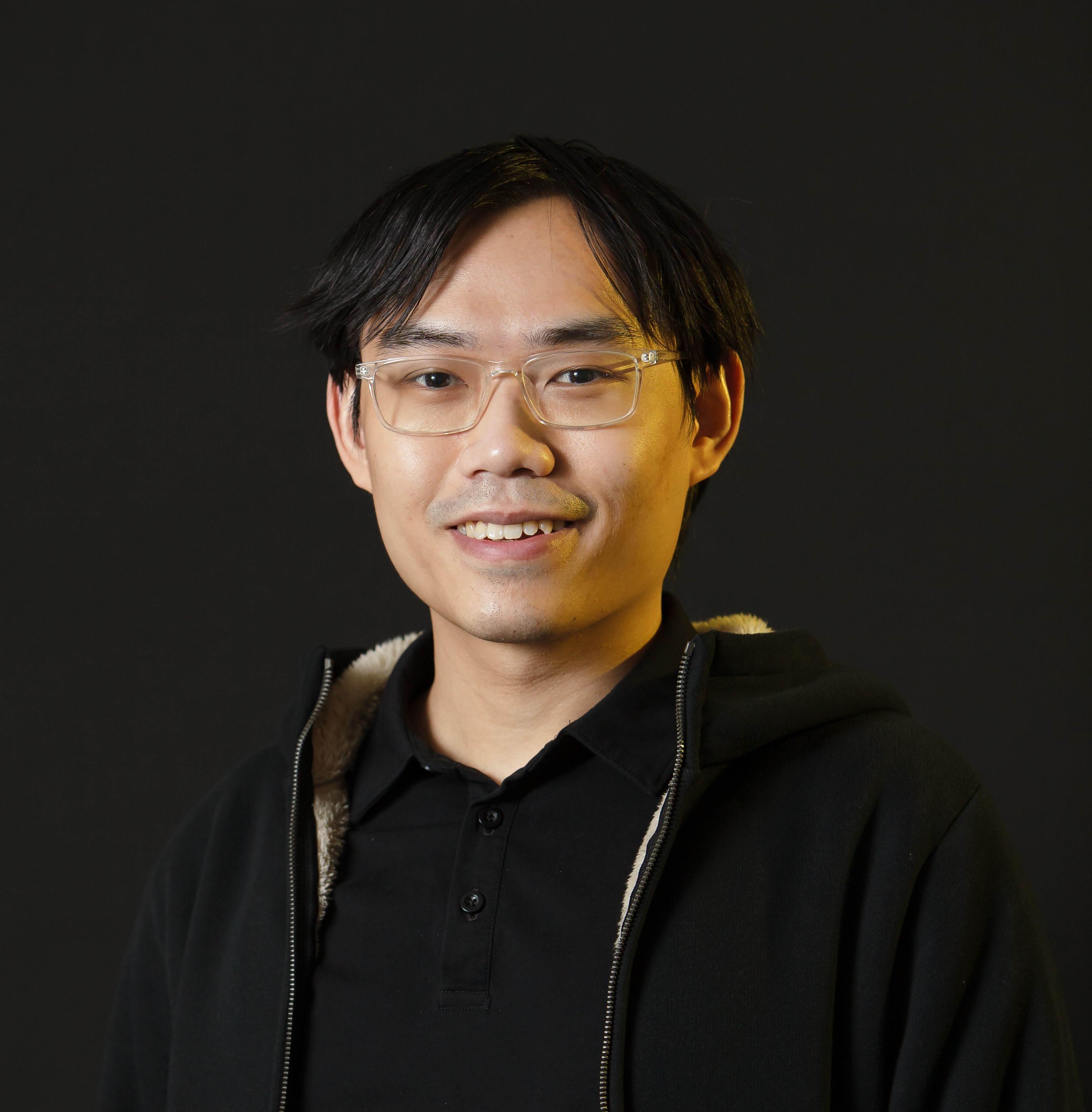

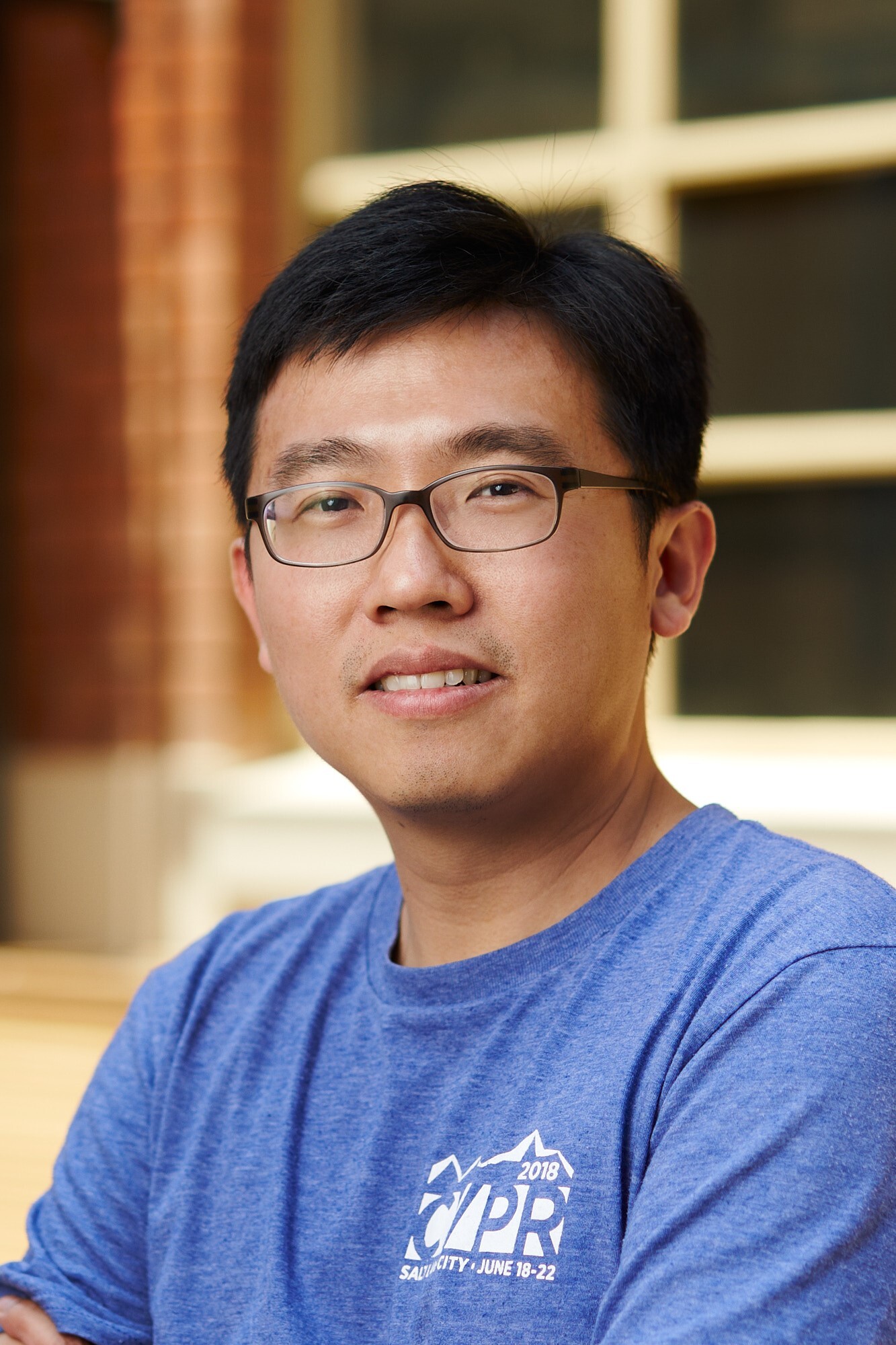

Tuan Anh Le

Tuan Anh Le is a postdoctoral associate in Josh Tenenbaum’s Computational Cognitive Science Lab in the Department of Brain and Cognitive Sciences at MIT. Previously, he was a PhD student in Frank Wood’s group in the Department of Engineering Science at the University of Oxford. He also interned at DeepMind, where he worked with Yee Whye Teh.

Probabilistic programming is a powerful abstraction layer for Bayesian inference that separates modeling from inference. However, approximate inference algorithms for general-purpose probabilistic programming systems are typically slow and must be re-run for each new observation. Amortized inference refers to learning an inference network ahead of time, so that an approximate posterior can be obtained quickly for each new observation. In the first part of the talk, I’ll discuss reweighted wake-sleep (RWS), a simple yet powerful method for simultaneously learning model parameters and amortizing inference. RWS supports discrete latent variables and doesn’t suffer from the “tighter bounds” effect. In the second part of the talk, I’ll discuss the thermodynamic variational objective (TVO), a novel objective for learning and amortized inference based on a connection between thermodynamic integration and variational inference. Both methods have traditionally been used for learning deep generative models; however, they’re equally applicable to a wider class of models, such as probabilistic programs. Finally, both methods have close connections with statistics: RWS can be viewed as adaptive importance sampling, and the TVO is derived directly from thermodynamic integration, which is often used for model selection via Bayes factors.
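To make the importance-sampling view concrete, here is a minimal sketch of the importance-weighting step that such methods build on. It is not the RWS algorithm from the talk, and everything in it is an assumption made for illustration: the toy model (z ~ N(0, 1), x | z ~ N(z, 1)), the Gaussian proposal q(z | x), and the hypothetical helper names `log_normal` and `importance_weights`. In an amortized setting, an inference network would output the proposal parameters `q_mean` and `q_var` from x; here they are passed in by hand.

```python
import numpy as np


def log_normal(x, mean, var):
    """Log density of a univariate Gaussian N(mean, var) evaluated at x."""
    return -0.5 * (np.log(2 * np.pi * var) + (x - mean) ** 2 / var)


def importance_weights(x, q_mean, q_var, num_samples, rng):
    """Importance sampling for the toy model z ~ N(0, 1), x | z ~ N(z, 1).

    Samples z from the proposal q(z | x) = N(q_mean, q_var) and returns
    (log marginal likelihood estimate, self-normalized weights).
    """
    z = q_mean + np.sqrt(q_var) * rng.standard_normal(num_samples)
    # Unnormalized log weights: log p(z) + log p(x | z) - log q(z | x).
    log_w = (
        log_normal(z, 0.0, 1.0)
        + log_normal(x, z, 1.0)
        - log_normal(z, q_mean, q_var)
    )
    # Numerically stable log-sum-exp of the log weights.
    m = log_w.max()
    log_sum = m + np.log(np.exp(log_w - m).sum())
    log_marginal_estimate = log_sum - np.log(num_samples)
    normalized_w = np.exp(log_w - log_sum)
    return log_marginal_estimate, normalized_w


rng = np.random.default_rng(0)
x = 1.3

# For this conjugate model the marginal is p(x) = N(0, 2) and the exact
# posterior is p(z | x) = N(x / 2, 1 / 2), so the estimate can be checked.
log_px_exact = log_normal(x, 0.0, 2.0)

# Proposal equal to the exact posterior: every weight is identical.
est_exact, w_exact = importance_weights(x, x / 2, 0.5, 1000, rng)

# Proposal equal to the prior: a poorer match, so the weights are uneven.
est_rough, w_rough = importance_weights(x, 0.0, 1.0, 1000, rng)

print(log_px_exact, est_exact, est_rough)
```

When the proposal matches the posterior exactly, all weights are equal and the estimate of log p(x) has zero variance. A mismatched proposal yields uneven weights and a noisier estimate, which is the gap that learning an inference network aims to close.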